Ten years after the AlphaGo match, Enhans returned to the same venue and created a different kind of moment. Where AI once competed, it now collaborates.

During a conversation with Lee Sedol, the system picked up on what he cared about, and the agents began planning a Go app on their own. Without anyone typing a single line of code, the app was complete in just 18 minutes.

The execution began without any explicit command. What made this possible is the way the agents collaborate through the AI OS.

Why Systems Become Unstable as More Agents Are Added

Connecting multiple agents seems like it should unlock more complex tasks. In practice, however, enterprise environments tell a different story. More agents means more complex communication, and longer chains mean more points where the flow can break.

Most multi-agent systems operate through direct agent-to-agent calls. A calls B, B calls C. This works when there are three agents.

Scale that to five, ten, or more, and a single agent's failure cascades through the entire system. When errors occur, tracing the exact cause becomes difficult.

Enhans designed a new protocol from the ground up to solve this problem.

Pheromone Protocol: Leave a Signal, Agents Respond on Their Own

Enhans drew inspiration from swarm intelligence.

Swarm intelligence is the phenomenon where individual agents, through local interactions, produce collectively intelligent behavior. Ant colonies are the classic example. When an ant finds food, it leaves a pheromone trail. Other ants detect that signal and respond on their own. Collaboration happens without any central command.

Enhans applied this principle directly to agent communication. When a file's contents change, an LLM inference that uses that file as its prompt is triggered automatically. All inter-agent operations run on top of this single rule.

Agent A completes a task and writes the result to a file (pheromone.md). A File Watcher detects the change in real time. Agent B reads the file, and its LLM inference triggers automatically. Agents never call each other directly. They leave signals in the environment and respond to those signals.
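A minimal sketch of this rule follows. It assumes a simple polling watcher that compares content hashes, standing in for whatever File Watcher Enhans actually uses (a production system would more likely rely on OS file-change notifications). `fake_llm`, `FileWatcher`, and the file contents are hypothetical illustrations, not Enhans code.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable, Dict, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call: it just echoes the prompt."""
    return f"response to: {prompt.strip()}"


class FileWatcher:
    """Hypothetical polling watcher that fires handlers when a file's content changes."""

    def __init__(self) -> None:
        self._handlers: Dict[Path, List[Callable[[str], None]]] = {}
        self._digests: Dict[Path, str] = {}

    @staticmethod
    def _digest(path: Path) -> str:
        # An empty string marks "file does not exist yet".
        if not path.exists():
            return ""
        return hashlib.sha256(path.read_bytes()).hexdigest()

    def subscribe(self, path: Path, handler: Callable[[str], None]) -> None:
        self._handlers.setdefault(path, []).append(handler)
        self._digests.setdefault(path, self._digest(path))

    def poll(self) -> int:
        """Check each watched file once and return how many handlers fired."""
        fired = 0
        for path, handlers in self._handlers.items():
            current = self._digest(path)
            if current == self._digests[path]:
                continue  # no new signal
            self._digests[path] = current
            if not current:
                continue  # file was removed; nothing to read
            text = path.read_text(encoding="utf-8")
            for handler in handlers:
                handler(text)  # the file content becomes the prompt
                fired += 1
        return fired


workdir = Path(tempfile.mkdtemp())
pheromone = workdir / "pheromone.md"
agent_b_outputs: List[str] = []

watcher = FileWatcher()
# Agent B subscribes to the file; its "inference" uses the file content as the prompt.
watcher.subscribe(pheromone, lambda text: agent_b_outputs.append(fake_llm(text)))

# Agent A finishes its task and leaves a signal. It never calls Agent B.
pheromone.write_text("# Agent A result\nUser wants a Go app.\n", encoding="utf-8")
watcher.poll()
print(agent_b_outputs)
```

The point to notice is that Agent A's code contains no reference to Agent B at all; the only coupling is the file path both sides agree on.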

From Request to App: The Demo Walkthrough

At the Lee Sedol demonstration, the Go app was built like this.

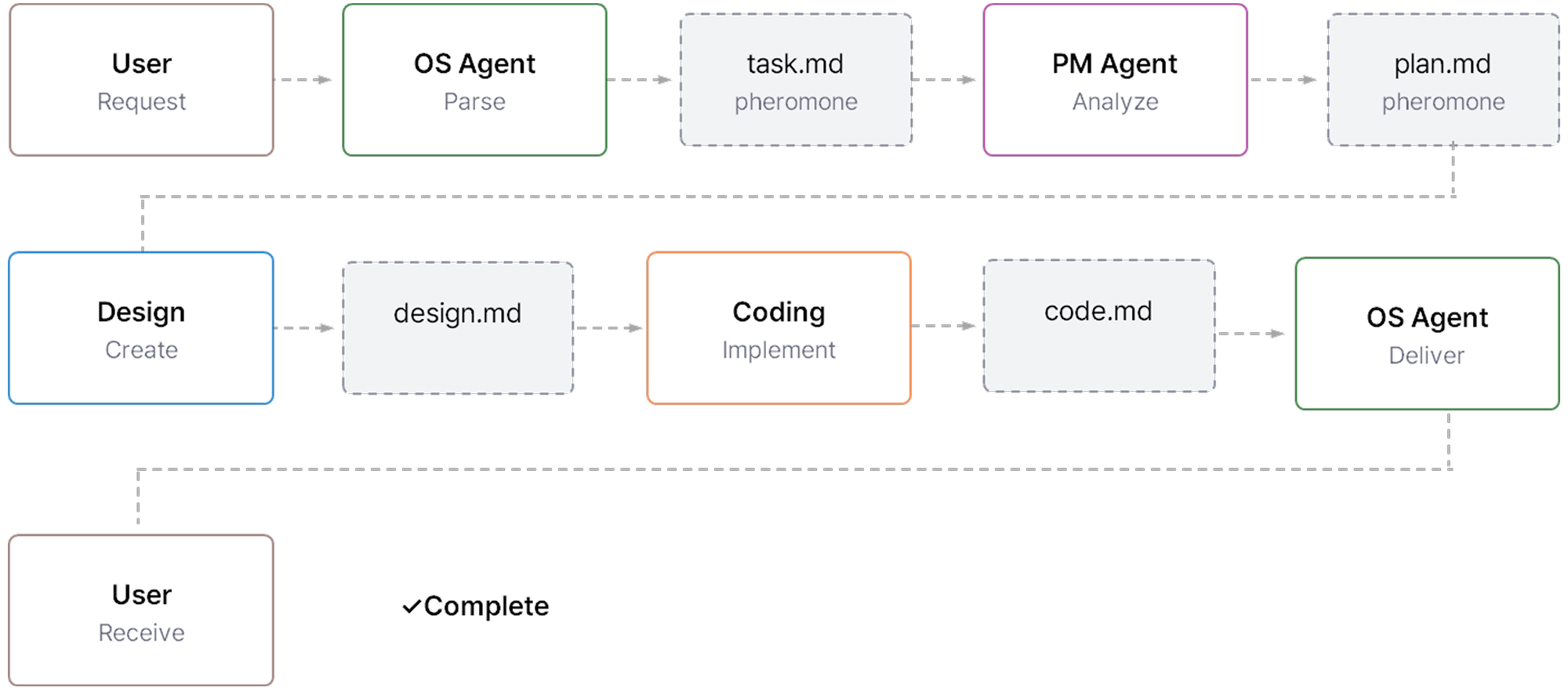

A user request comes in and the OS Agent parses it, writing to task.md. The PM Agent detects this and writes plan.md. The Design Agent reads plan.md and produces design.md. The Coding Agent reads design.md and implements the code. The OS Agent delivers the final result to the user. Every step was connected through file-based signals.
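The walkthrough can be approximated as a chain of agents, each reacting to one file and writing the next. This is a sketch under stated assumptions: `request.md`, `code.md`, and `result.md` are hypothetical file names (the source names only `task.md`, `plan.md`, and `design.md`), and each agent's LLM inference is replaced by a string template.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path
from typing import Callable, Dict, List


@dataclass
class FileAgent:
    name: str
    reads: str                  # file whose changes this agent reacts to
    writes: str                 # file this agent leaves as its signal
    work: Callable[[str], str]  # stand-in for the agent's LLM inference


def run_until_quiet(request: str, agents: List[FileAgent], workdir: Path) -> List[str]:
    """Let agents react to file changes until no agent sees a new signal.

    Returns the order in which agents fired.
    """
    (workdir / "request.md").write_text(request, encoding="utf-8")
    last_seen: Dict[str, str] = {}
    trace: List[str] = []
    changed = True
    while changed:
        changed = False
        for agent in agents:
            src = workdir / agent.reads
            if not src.exists():
                continue
            text = src.read_text(encoding="utf-8")
            if last_seen.get(agent.name) == text:
                continue  # nothing new for this agent
            last_seen[agent.name] = text
            (workdir / agent.writes).write_text(agent.work(text), encoding="utf-8")
            trace.append(agent.name)
            changed = True
    return trace


pipeline = [
    FileAgent("OS Agent (parse)", "request.md", "task.md", lambda t: f"task: {t.strip()}\n"),
    FileAgent("PM Agent", "task.md", "plan.md", lambda t: f"plan for [{t.strip()}]\n"),
    FileAgent("Design Agent", "plan.md", "design.md", lambda t: f"design for [{t.strip()}]\n"),
    FileAgent("Coding Agent", "design.md", "code.md", lambda t: f"code for [{t.strip()}]\n"),
    FileAgent("OS Agent (deliver)", "code.md", "result.md", lambda t: f"delivered: {t.strip()}\n"),
]

workdir = Path(tempfile.mkdtemp())
trace = run_until_quiet("build a Go app", pipeline, workdir)
print(trace)
print((workdir / "result.md").read_text(encoding="utf-8"))
```

Because no agent calls another, the order of the `pipeline` list does not matter for the final result; a shuffled list only takes more passes before the files stop changing.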

This protocol requires no separate message broker, database, or API server. The Markdown files double as logs that humans can read directly, and the system can recover its state after a restart.
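One way state recovery could work is sketched below, assuming the stages run in a fixed order and that a stage counts as done once its output file exists. `STAGES` and `resume_point` are hypothetical names; a real system would also need to detect half-written files.

```python
import tempfile
from pathlib import Path
from typing import List, Optional

# Assumed stage order, using the file names from the demo walkthrough.
STAGES: List[str] = ["task.md", "plan.md", "design.md"]


def resume_point(workdir: Path) -> Optional[str]:
    """Return the first stage whose output file is missing, or None if all are done."""
    for name in STAGES:
        if not (workdir / name).exists():
            return name
    return None


workdir = Path(tempfile.mkdtemp())
(workdir / "task.md").write_text("Build a Go app.\n", encoding="utf-8")
(workdir / "plan.md").write_text("1. Board UI\n2. Rules engine\n", encoding="utf-8")
# Simulated crash: the process stops before design.md is written.

# After a restart, the files themselves say where to pick up, and a human can read them.
print(resume_point(workdir))
print((workdir / "plan.md").read_text(encoding="utf-8"))
```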

As the number of agents grows, the structure stays clean, because agents interact only by reading and writing files rather than by calling one another. The more the agents work, the more information accumulates in the environment, producing a system-wide learning effect over time.

3 Side Brain: Each Agent Holds Reasoning, Action, and Memory

With inter-agent communication handled, the question becomes how each agent is designed internally. Enhans standardized agents into three Brain types.

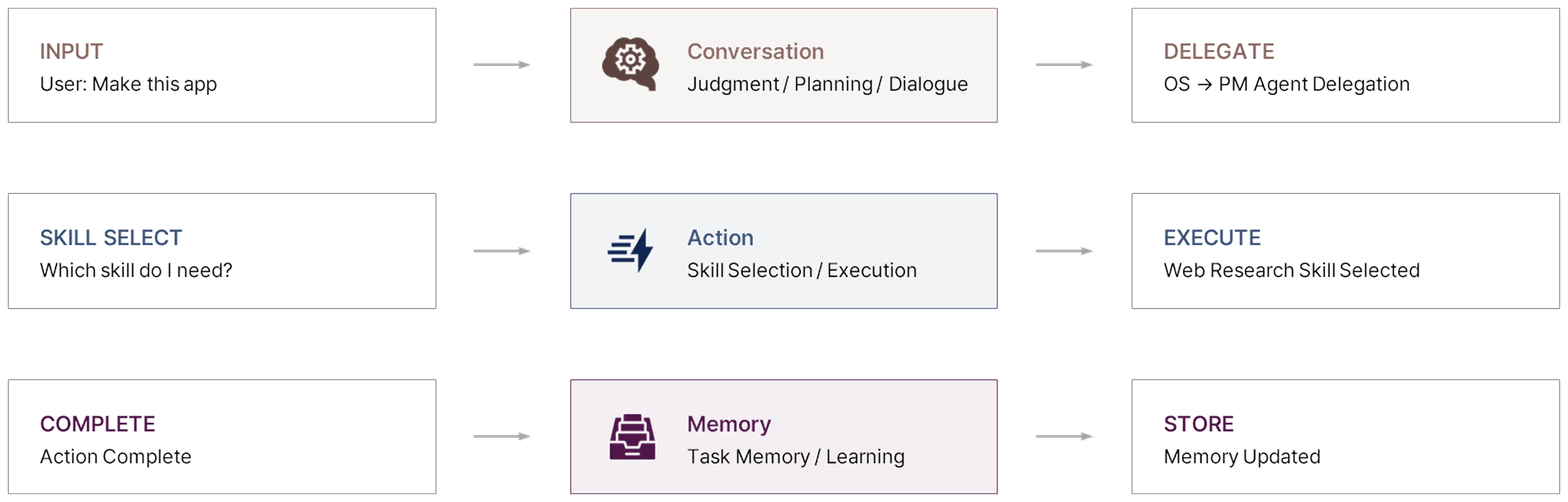

The Conversation Brain handles natural language communication with users or other agents, generating dialogue and deciding the next action. The Action Brain selects and executes the appropriate skill, tool, or function for an incoming request. The Memory Brain summarizes task results, manages long-term memory, and updates context so it can be used in future reasoning.

Each agent is composed of these Brain types, combined as needed. The OS Agent is built around the Conversation Brain, handling user interaction and Sub Agent delegation. Sub Agents execute their skills through the Action Brain and reflect results into long-term context through the Memory Brain.

Each agent becomes an independent execution unit with its own reasoning, action, and memory. It judges, executes, and remembers on its own.
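The composition can be sketched as an agent assembled from three brain objects. Everything here is a hypothetical illustration: keyword matching stands in for the Conversation Brain's LLM reasoning, and truncation stands in for the Memory Brain's summarization.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


class ConversationBrain:
    """Stand-in for LLM dialogue: picks the first skill named in the message."""

    def decide(self, message: str, skills: List[str]) -> Optional[str]:
        lowered = message.lower()
        return next((s for s in skills if s in lowered), None)


@dataclass
class ActionBrain:
    """Selects and runs a registered skill, tool, or function."""
    skills: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def execute(self, skill: str, payload: str) -> str:
        return self.skills[skill](payload)


@dataclass
class MemoryBrain:
    """Keeps short summaries of results as long-term context."""
    notes: List[str] = field(default_factory=list)

    def remember(self, result: str, limit: int = 30) -> None:
        # Truncation is a placeholder for real LLM summarization.
        self.notes.append(result[:limit])


@dataclass
class Agent:
    """An independent execution unit built from the brains it needs."""
    name: str
    conversation: ConversationBrain = field(default_factory=ConversationBrain)
    action: ActionBrain = field(default_factory=ActionBrain)
    memory: MemoryBrain = field(default_factory=MemoryBrain)

    def handle(self, message: str) -> str:
        skill = self.conversation.decide(message, list(self.action.skills))  # judge
        if skill is None:
            return f"{self.name}: no matching skill"
        result = self.action.execute(skill, message)  # execute
        self.memory.remember(result)  # remember
        return result


coder = Agent("Coding Agent", action=ActionBrain({"board": lambda m: "rendered a 19x19 Go board"}))
print(coder.handle("Please draw the board"))
print(coder.memory.notes)
```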

How Signals Flow Between Agent Brains

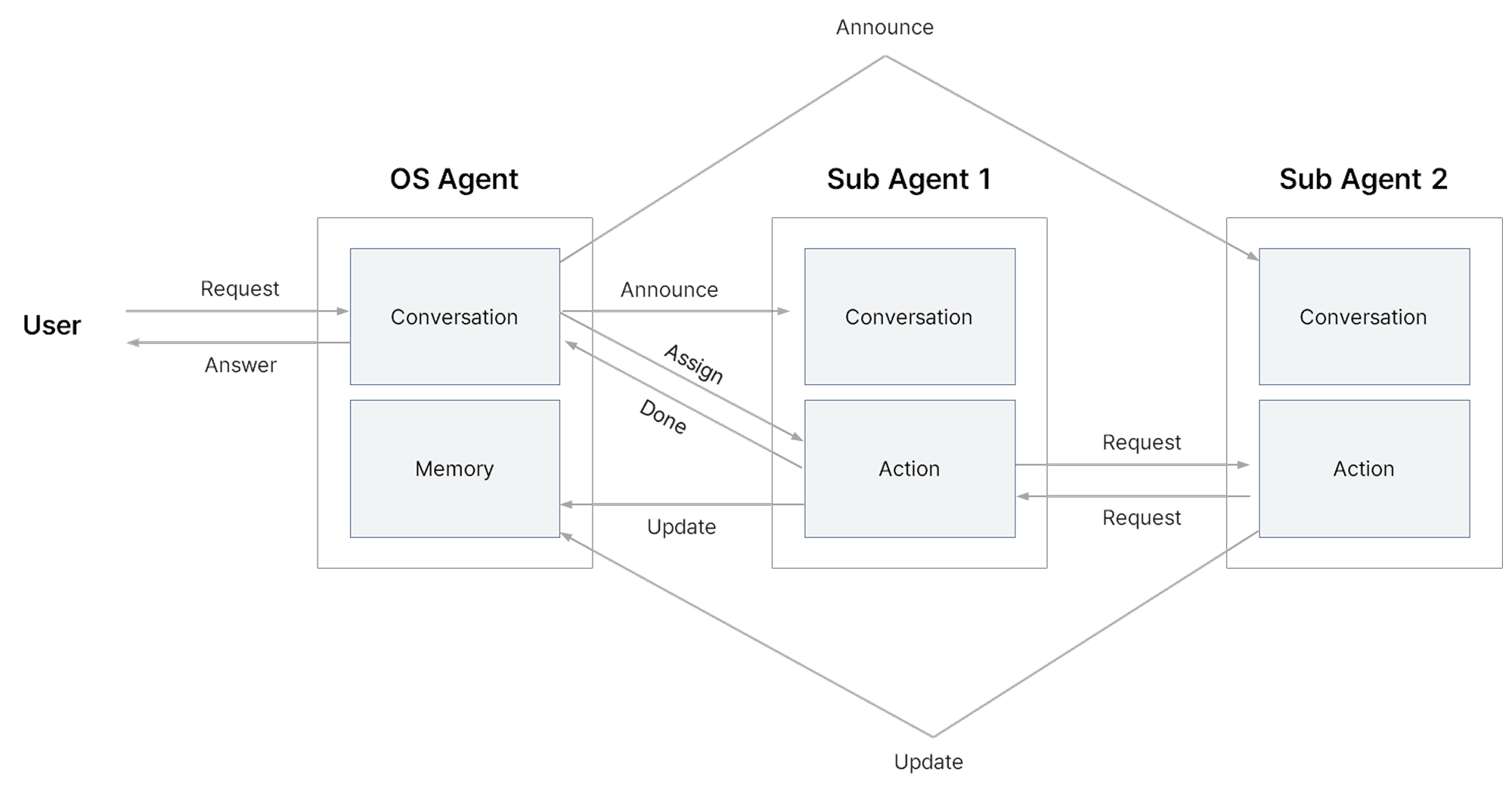

The OS Agent operates around the Conversation Brain, handling user dialogue while delegating tasks to Sub Agents. When delegating, requests are directed to the Sub Agent's Action Brain.

The Sub Agent's Action Brain selects the appropriate skill, tool, or function and carries out the task. Once complete, results are reported back to the OS Agent's Conversation Brain. When a Sub Agent needs to make an additional request to another Sub Agent, that request goes directly to the receiving Sub Agent's Action Brain.

Task results are delivered not only to the OS Agent's Conversation Brain but also to the Memory Brain, to update long-term memory. That updated memory is then used when the OS Agent generates its next response.

In certain cases, an Announce function operates from the OS Agent's Conversation Brain to a Sub Agent's Conversation Brain. This Announce function is covered in more detail in the next section.
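The routing rules in this section can be condensed into a small lookup, sketched below. The `Signal` and `Brain` names are hypothetical, and the sketch assumes that the Memory Brain receiving task results is the OS Agent's own, which is one reading of the description above.

```python
from enum import Enum
from typing import List, Tuple


class Brain(Enum):
    CONVERSATION = "conversation"
    ACTION = "action"
    MEMORY = "memory"


class Signal(Enum):
    DELEGATE = "delegate"  # OS Agent -> Sub Agent
    REQUEST = "request"    # Sub Agent -> another Sub Agent
    RESULT = "result"      # Sub Agent -> OS Agent
    ANNOUNCE = "announce"  # OS Agent -> Sub Agent, in certain cases


Destination = Tuple[str, Brain]


def route(signal: Signal, receiver: str) -> List[Destination]:
    """Return the (agent, brain) pairs a signal should reach."""
    if signal in (Signal.DELEGATE, Signal.REQUEST):
        # Work requests land on the receiving agent's Action Brain.
        return [(receiver, Brain.ACTION)]
    if signal is Signal.RESULT:
        # Results update the OS Agent's dialogue and (assumed) its Memory Brain.
        return [(receiver, Brain.CONVERSATION), (receiver, Brain.MEMORY)]
    if signal is Signal.ANNOUNCE:
        return [(receiver, Brain.CONVERSATION)]
    raise ValueError(f"unknown signal: {signal}")


print(route(Signal.DELEGATE, "Design Agent"))
print(route(Signal.RESULT, "OS Agent"))
```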

How the OS Agent Splits Tasks and Assembles Its Team

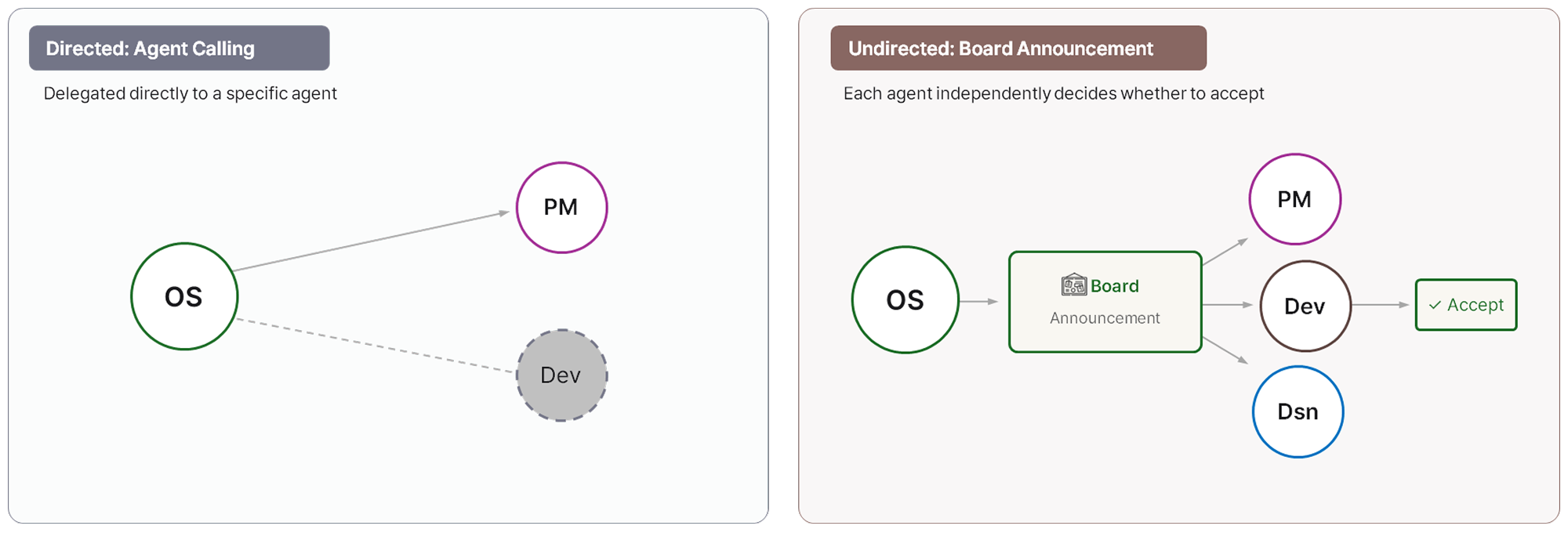

When the recipient is clear, the task is delegated directly to a specific agent through Agent Calling. With this approach, the OS Agent focuses on judgment and coordination while each agent handles execution. If an error occurs at one point, the overall flow does not stop. Agents independently determine their next action and continue the task. This structure also enables self-evolving behavior, where performance improves over time.
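A minimal sketch of direct delegation with failure isolation follows. `call_agents`, the registry, and the failing Design Agent are hypothetical; the sketch only shows that one failed step does not halt the others, not how each agent chooses its next action or how the system improves over time.

```python
from typing import Callable, Dict, List, Tuple

Handler = Callable[[str], str]


def call_agents(tasks: List[Tuple[str, str]], registry: Dict[str, Handler]) -> Tuple[List[str], List[str]]:
    """Delegate each (agent, task) pair directly; a failure is recorded, not fatal."""
    results: List[str] = []
    errors: List[str] = []
    for agent_name, task in tasks:
        try:
            results.append(registry[agent_name](task))
        except Exception as exc:  # isolate the failing step so the rest of the flow continues
            errors.append(f"{agent_name}: {exc}")
    return results, errors


def flaky_design(task: str) -> str:
    raise RuntimeError("model timeout")


registry: Dict[str, Handler] = {
    "PM Agent": lambda t: f"plan: {t}",
    "Design Agent": flaky_design,
    "Coding Agent": lambda t: f"code: {t}",
}
results, errors = call_agents(
    [("PM Agent", "rules"), ("Design Agent", "board"), ("Coding Agent", "board")], registry
)
print(results)
print(errors)
```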

When the recipient is unclear, tasks are handled through Board Announcement. The task is broadcast to all agents simultaneously, and each agent independently decides whether it can handle it. This ensures tasks are completed even when ownership is ambiguous.
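Board Announcement can be sketched as a broadcast where every agent applies its own check. The keyword test is a hypothetical stand-in for each agent's judgment, and because the source does not say how the system picks among several volunteers, the sketch simply returns all of them.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class BoardAgent:
    name: str
    skills: FrozenSet[str]

    def can_handle(self, task: str) -> bool:
        # Each agent decides independently; here, a simple keyword check.
        words = set(task.lower().split())
        return bool(words & self.skills)


def announce(task: str, agents: List[BoardAgent]) -> List[str]:
    """Broadcast a task to every agent and collect those that volunteer."""
    return [agent.name for agent in agents if agent.can_handle(task)]


agents = [
    BoardAgent("PM Agent", frozenset({"plan", "schedule"})),
    BoardAgent("Design Agent", frozenset({"layout", "colors"})),
    BoardAgent("Coding Agent", frozenset({"implement", "bug"})),
]
print(announce("fix the bug in the board layout", agents))
```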

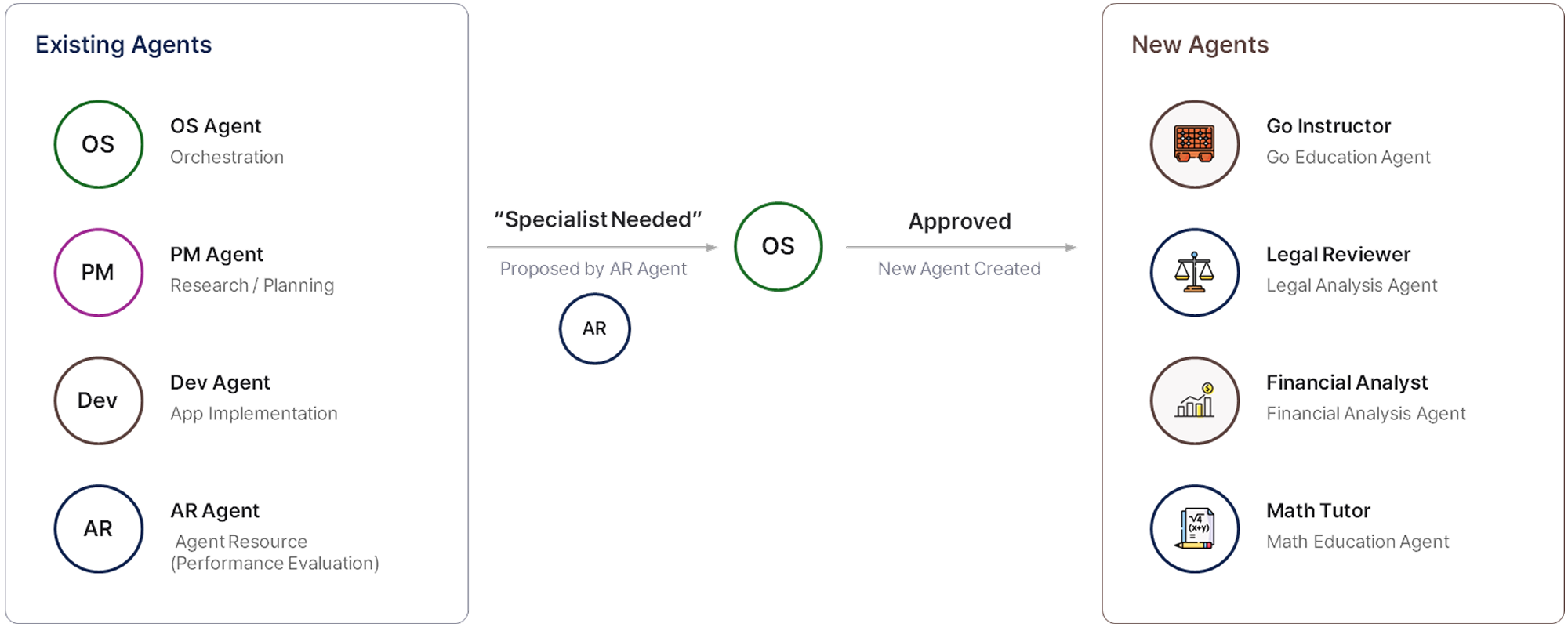

At the Go app demonstration, a Go education specialist agent was created dynamically on the spot. This structure, called Agent Spawning, generates agents only when a specific task requires them. They are added like plugins on top of the existing system without separate deployment. The right expertise becomes available exactly when a specific task calls for it.
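Agent Spawning can be sketched as a lookup that creates and registers a specialist only when none exists. `make_specialist` and `find_or_spawn` are hypothetical; in a real system the factory would presumably configure a new LLM-backed agent rather than return a string template.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


def make_specialist(topic: str) -> Handler:
    """Hypothetical factory for a new specialist agent."""
    def handle(task: str) -> str:
        return f"[{topic} specialist] {task}"
    return handle


def find_or_spawn(topic: str, registry: Dict[str, Handler]) -> Handler:
    """Return the agent for `topic`, spawning and registering one only if it is missing."""
    name = f"{topic} specialist"
    if name not in registry:
        registry[name] = make_specialist(topic)  # added at runtime, like a plugin
    return registry[name]


registry: Dict[str, Handler] = {
    "PM Agent": lambda t: f"plan: {t}",
    "Coding Agent": lambda t: f"code: {t}",
}
tutor = find_or_spawn("Go education", registry)
print(tutor("explain why this stone is in atari"))
print(sorted(registry))
```

The existing agents are untouched; the new one simply becomes another entry that later tasks can reach.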

AI OS: The Protocol That Defines What Agents Can Do

The answer to "what tasks can multi-agent systems handle" comes down to one thing: how the system is structured.

How agents communicate, how they delegate, how they execute. The architecture behind agents that actually collaborate is what determines the scope of what is possible.

In the next part, we will cover how this system connects to users and how it presents itself, including the Bridge layer and interface design.
